Deepfake remote workers from North Korea have been making cybersecurity headlines for a while now. Using fabricated identities, AI-generated résumés, and even deepfaked video calls, these malicious agents secure jobs at Western companies. Once inside, they operate like trusted employees while carrying out their true mission: stealing sensitive intellectual property and exfiltrating customer data.

What makes this new threat so dangerous is that it blurs the line between insider risk and external attack. On the surface, these individuals appear to be legitimate staff members with proper access, yet behind this facade are highly skilled agents with malicious intentions.

Preventing data exfiltration is hard enough as it is. The hybrid nature of this threat makes it even harder to stop before the damage is done, especially with traditional security tools.

In this blog, we’ll explore the rise of deepfake remote workers and why they pose a unique challenge to security teams, then outline how context-aware Data Loss Prevention (DLP) tools can help you stay ahead of them.

The Rise of Deepfake Remote Workers

Over the past several years, U.S. and European authorities have issued repeated warnings about North Korean operatives posing as remote tech workers. Their goal is twofold: earn foreign currency for the regime despite sanctions, and gain access to sensitive corporate systems that can be exploited for espionage or sold on criminal markets.

The scale and impact of this threat are no longer theoretical. CrowdStrike found over 320 incidents in the past 12 months where North Korean operatives obtained remote jobs at Western companies using deceptive identities and AI tools. That’s a 220% increase from the previous year.

In May 2025, U.S. officials revealed that North Korean operatives had compromised dozens of Fortune 500 companies, using fraudulent remote hires to siphon sensitive corporate data and intellectual property back to Pyongyang. This was not a one-off tactic, but a systematic infiltration campaign targeting some of the world’s largest enterprises.

In one notable example from 2024, KnowBe4’s HR team hired a software engineer who turned out to be a North Korean fake IT worker. As soon as he received his Mac workstation, he began manipulating session history files, transferring potentially harmful files, and executing unauthorized software. He was caught before much damage was done.

In another case from June 2025, a group posing as blockchain developers used 31 fake identities, advanced identity deception techniques, and stealthy remote access tools to infiltrate the crypto platform Favrr and pull off a $680K crypto heist.

It’s clear that North Korea is actively using remote employment as a vector for data exfiltration. Unlike conventional phishing or malware campaigns, this strategy weaponizes corporate trust, embedding adversaries directly into the workforce. For security teams, the challenge is no longer just about keeping attackers out of the perimeter; it’s about recognizing when the attacker is already “inside,” disguised as a colleague.

Why This Threat Is Unique

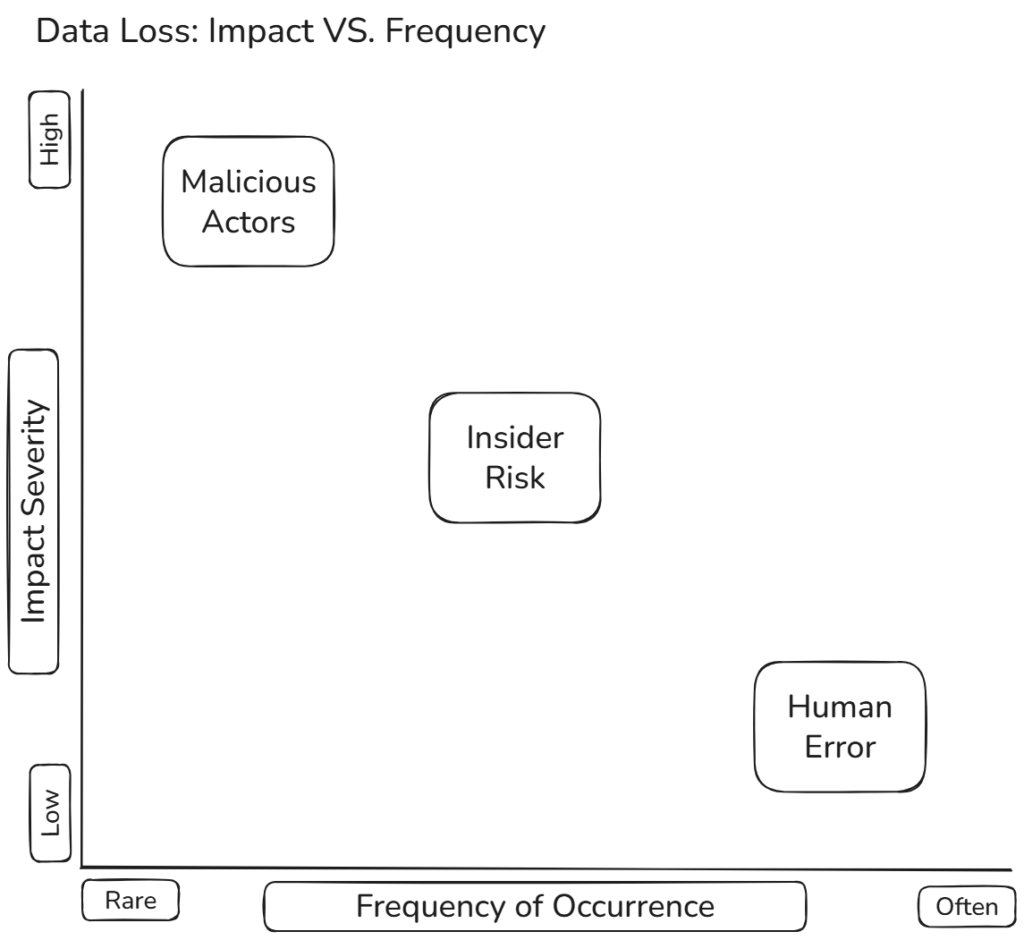

In our previous article on the three data loss hazards, we described the classic categories security teams face: human error, insider risk, and external attackers. Deepfake remote workers are a unique case in this regard – they begin as external adversaries but, once hired, become indistinguishable from insiders.

This hybrid nature is precisely what makes the problem so difficult:

Unlike a careless employee or even a disgruntled insider, these operatives are mission-driven from day one – every credential, system, and dataset they obtain is used for exploitation.

Unlike smash-and-grab attackers, North Korean operatives are willing to play the long game, working quietly for months, building trust, and extracting value without tripping obvious alarms.

From the defender’s side, traditional tools are designed to detect either insiders or outsiders, not both at once. A fake employee who is actually a foreign adversary doesn’t fit neatly into existing categories, creating a blind spot that static rules, basic anomaly detection, and perimeter defenses cannot cover.

Let’s look at that more closely, specifically in the context of DLP tools.

The DLP Challenge

Data Loss Prevention (DLP) tools were never built for adversaries who look like employees. Most solutions are tuned for one of three scenarios: stopping accidental leaks from well-meaning staff, preventing data exfiltration by ‘amateur’ insiders (e.g., stealing leads before leaving the company), or blocking clear signs of an external exfiltration attack. In practice, many tools struggle to handle even these scenarios effectively, let alone this new threat.

Static DLP policies like “block uploads over 50MB” or “alert on large downloads” don’t help when the operative’s role legitimately involves handling large volumes of sensitive data. Similarly, keyword- or pattern-based detection fails because the data movement appears to be business-related. By the time a spike in activity is noticed, the data may already be gone.
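To make the problem concrete, here is a minimal sketch of a static size-threshold rule like “block uploads over 50MB”. The `Transfer` record, user names, roles, and destinations are hypothetical and do not reflect any vendor’s schema. The rule blocks a data engineer’s legitimate bulk export while letting an operative move the same data in small chunks.

```python
from dataclasses import dataclass

# Hypothetical transfer event; field names are illustrative only.
@dataclass
class Transfer:
    user: str
    role: str
    size_mb: float
    destination: str

STATIC_LIMIT_MB = 50  # the "block uploads over 50MB" rule from the text

def static_rule(t: Transfer) -> bool:
    """Return True if the static policy would block this transfer."""
    return t.size_mb > STATIC_LIMIT_MB

transfers = [
    # A data engineer's routine, legitimate export: blocked (a false positive).
    Transfer("alice", "data-engineer", 200.0, "s3://corp-analytics"),
    # An operative moving data in 10 MB chunks to an outside host: never blocked.
    *[Transfer("mallory", "developer", 10.0, "files.example.net") for _ in range(30)],
]

blocked = [t.user for t in transfers if static_rule(t)]
missed_mb = sum(
    t.size_mb for t in transfers if t.user == "mallory" and not static_rule(t)
)

print(f"blocked: {blocked}")
print(f"exfiltrated under the threshold: {missed_mb:.0f} MB")
```

The rule only sees the size of each event, so it has no way to tell that the first transfer is business as usual and the other thirty add up to 300 MB leaving for an unknown host.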

Trying to prevent something like this usually results in either a flood of false positives or missed detections. What’s missing is a true understanding of context.

Automated, Contextual DLP

Traditional DLP relies on static rules and policies that treat all data movement the same. In reality, not every file transfer or database query carries the same risk. The difference between a legitimate business action and malicious exfiltration often lies in the why, not the what.

A better-suited approach is automated, contextual DLP.

At ORION, we use a set of AI agents that learn the natural flow of data across your organization: which teams normally access which systems, how files are typically shared, and where data is expected to go. By understanding both the source of the data and the context of each action, these agents can make sense of behavior in ways that manually defined policies never could.
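As a rough illustration of “learning which teams normally access which systems”, the sketch below builds a simple frequency-based baseline from a historical access log and flags access outside a team’s usual scope. This is a deliberately simplified stand-in, not ORION’s agents; the log entries, team and system names, and the `min_count` parameter are all hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical access log: (team, system) pairs observed during a learning window.
history = [
    ("frontend", "web-repo"), ("frontend", "web-repo"), ("frontend", "design-assets"),
    ("data", "warehouse"), ("data", "warehouse"), ("data", "etl-repo"),
    ("finance", "billing-db"), ("finance", "billing-db"),
]

def learn_baseline(events, min_count=1):
    """Map each team to the systems it accessed at least min_count times."""
    counts = defaultdict(Counter)
    for team, system in events:
        counts[team][system] += 1
    return {
        team: {system for system, n in c.items() if n >= min_count}
        for team, c in counts.items()
    }

def out_of_scope(baseline, team, system):
    """True if the system is not part of the team's learned baseline."""
    return system not in baseline.get(team, set())

baseline = learn_baseline(history)
print(out_of_scope(baseline, "frontend", "web-repo"))    # False: normal flow
print(out_of_scope(baseline, "frontend", "billing-db"))  # True: outside usual scope
```

A real system would keep learning over time and weigh recency and volume, but even this toy version answers a question a static policy never asks: is this access normal for this team?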

This allows us to detect when:

- A developer suddenly starts pulling data from repositories outside their usual scope.

- An employee transfers sensitive files to an unfamiliar domain or to themselves.

- A team member’s data usage sharply diverges from peers in the same role.

- A seemingly normal upload becomes suspicious when combined with time, location, or unusual access patterns.
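The last two indicators above can be sketched by combining weak signals: how far a user’s outbound volume sits from peers in the same role (a z-score), whether the destination has been seen before, and whether the activity happens at an unusual hour. The peer volumes, known domains, the 3.0 z-score cutoff, the off-hours window, and the two-signal flagging rule are hypothetical choices for illustration, not ORION’s detection logic.

```python
from statistics import mean, pstdev

# Hypothetical daily outbound volume (MB) for peers in the same role.
peer_volumes = [40.0, 55.0, 45.0, 60.0]
# Hypothetical destinations this team has used before.
known_domains = {"github.com", "corp.example.com"}

def peer_zscore(value, peers):
    """Number of standard deviations `value` sits above the peer mean."""
    mu, sigma = mean(peers), pstdev(peers)
    return 0.0 if sigma == 0 else (value - mu) / sigma

def indicators(volume_mb, domain, hour, peers):
    """Collect context signals; each is weak alone but telling in combination."""
    found = []
    if peer_zscore(volume_mb, peers) > 3.0:
        found.append("diverges from peers")
    if domain not in known_domains:
        found.append("unfamiliar destination")
    if hour < 6 or hour >= 22:
        found.append("unusual time")
    return found

def decide(signals, min_signals=2):
    """Flag only when several independent signals line up."""
    return "flag" if len(signals) >= min_signals else "allow"

signals = indicators(180.0, "files.example.net", 3, peer_volumes)
print(signals, decide(signals))
print(indicators(50.0, "github.com", 14, peer_volumes), decide([]))
```

Requiring more than one signal is what keeps noise down: an off-hours upload to a familiar destination, or a single large but typical transfer, is allowed on its own.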

These data loss indicators collected by ORION’s agents are context-based signals that suggest data may be leaving the organization inappropriately. By focusing on intent rather than policies and thresholds, ORION flags and prevents dangerous actions in real time, while minimizing false positives that frustrate employees and overload analysts.

This contextual, adaptive approach enables countermeasures against threats like deepfake remote workers. When the attacker is an employee, only tools that understand the bigger picture of behavior and intent can distinguish between normal business operations and malicious exfiltration.

Context Is the Core of Modern Data Defense

Deepfake remote workers are just one of an ever-growing list of new challenges facing security teams. They blur the line between insider risk and external attack, embedding adversaries directly into the workforce under the guise of legitimate employees. Traditional DLP tools designed for a simpler world of accidental leaks or ‘amateur’ insiders are not equipped to address this hybrid threat.

The only way forward is to embrace intent-aware, contextual defenses. By understanding how data normally flows through an organization and detecting the subtle deviations that signal something’s wrong, security teams can finally close the blind spot exploited by North Korea’s deepfake operatives and others like them.

This is exactly what led us to build ORION to reduce noise, prevent exfiltration in real time, and give security teams the advantage they need against attackers who now look just like employees.

The question you might want to ask yourself is this: if a fake remote worker slipped through your hiring process tomorrow, would you be able to spot them before sensitive data walked out the door?